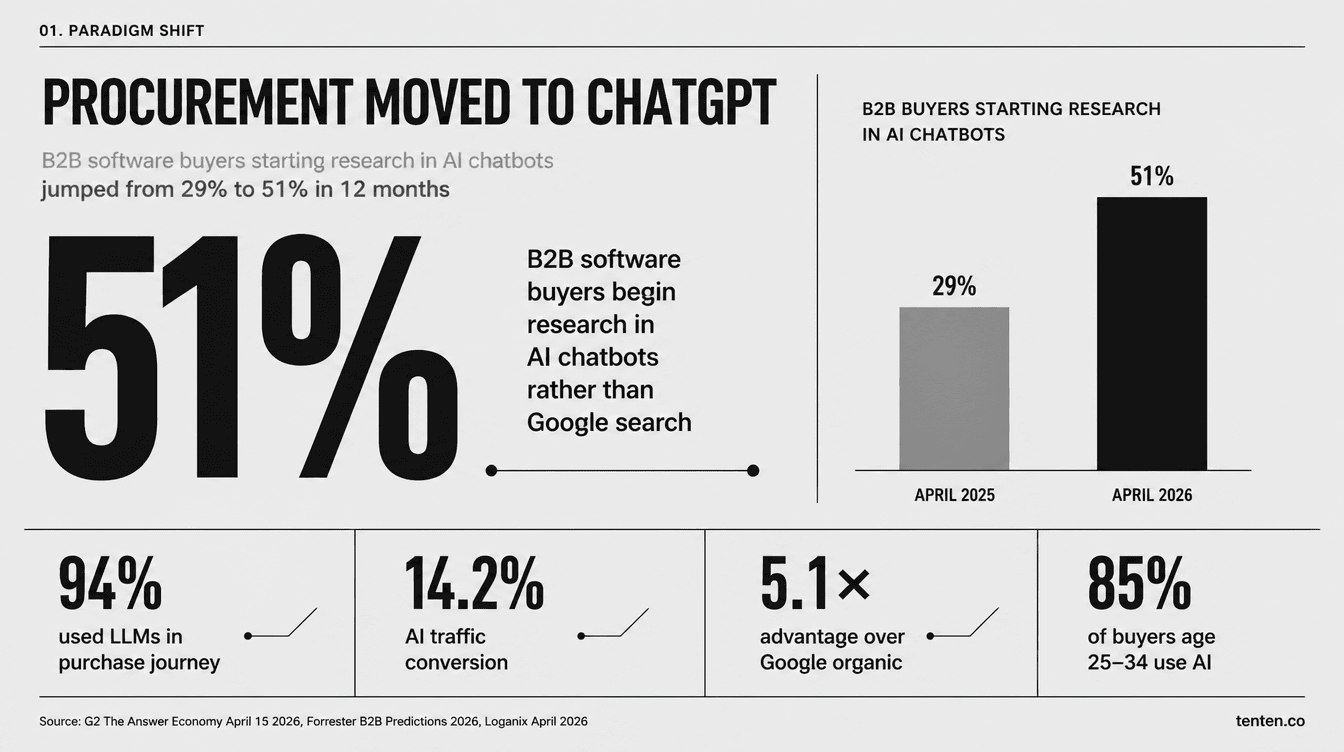

Taiwan's industrial PC and semiconductor manufacturers are losing pipeline they can't see, because the AI tools building their buyers' shortlists can't read PDF datasheets. G2 published The Answer Economy on April 15, 2026, surveying 1,076 B2B software buyers across North America, EMEA, and APAC. Fifty-one percent now start their software research in an AI chatbot rather than Google. Twelve months earlier, that number was 29%. ChatGPT alone holds a 63% share of B2B AI chatbot research. Sixty-nine percent of buyers say AI guidance led them to a different vendor than they originally planned, and one in three bought from a company they had never heard of before AI surfaced it. The sample is software buyers, but the same shift isn't hypothetical for Taiwan's hardware sector. It's happening right now to Advantech's competitors, to IEI's distributors, to TSMC's tooling suppliers.

This article is for engineering and marketing leaders at industrial hardware companies, including the Taiwan IPC giants and the long tail of mid-market vendors, who need to understand what just changed in the buyer journey and what their websites need to look like to participate in it. The technical answer is structured data. The strategic answer is harder, because it requires retiring some assumptions that have governed B2B hardware marketing for two decades.

What changed in the buyer journey

Forrester's 2026 B2B Predictions report found that 94% of B2B decision-makers used at least one large language model during their 2025 purchase process. 6sense's 2026 Buyer Experience Report adds that buyers complete roughly 70% of their decision journey before they ever fill out a vendor form. The combination is what should worry you. That 70% used to happen across vendor websites, review sites, and analyst reports, all channels you could measure and influence. Now it happens inside ChatGPT, Claude, and Perplexity, where you have no analytics visibility and often no presence at all.

The age curve makes this a permanent shift. Magenta Associates surveyed 300 UK senior decision-makers with B2B purchasing power in 2025. Among buyers aged 25 to 34, 85% use AI tools to research and evaluate suppliers. Among buyers aged 55 to 64, only 23% do. The 25-to-34 cohort is exactly who is getting promoted into procurement manager, senior buyer, and director-of-supply-chain roles right now. By 2028, Gartner projects 90% of B2B buying will be agent-intermediated, with $15 trillion in B2B spend flowing through AI agent exchanges. The transition window is short.

There's a conversion math piece that keeps getting buried in these reports. Loganix synthesized six independent studies in April 2026, including Averi's analysis of 680 million AI citations, Exposure Ninja's conversion benchmark, and SparkToro's 2,961 controlled research sessions. AI search traffic converts at 14.2% on average. Google organic traffic converts at 2.8%. Claude users convert at 16.8%, ChatGPT at 14.2%, Perplexity at 12.4%. The 5.1x conversion advantage exists because AI-referred traffic arrives pre-qualified. The buyer has already validated that you're an option, run a comparison, and decided to look closer. Vercel publicly reported that ChatGPT now drives 10% of new signups. That's a SaaS data point, but the structure applies to industrial hardware too. The buyers who reach your site through AI are the ones already on your shortlist. Everyone else never visits.
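The 5.1x figure is simple division of the two quoted averages (14.2 / 2.8). The short Python sketch below makes the per-platform ratios explicit and turns them into a traffic comparison. It treats the averages from different studies as directly comparable, which is itself an assumption, and the 10-conversion target is an arbitrary illustration rather than a figure from the research.

```python
import math

GOOGLE_ORGANIC_PCT = 2.8  # Google organic conversion rate quoted above
AI_RATES_PCT = {"AI search (avg)": 14.2, "Claude": 16.8, "ChatGPT": 14.2, "Perplexity": 12.4}


def lift_vs_organic(rate_pct, baseline_pct=GOOGLE_ORGANIC_PCT):
    """How many times better a channel converts than Google organic."""
    return rate_pct / baseline_pct


def visits_needed(conversions, rate_pct):
    """Visits required to expect a given number of conversions at a given rate."""
    return math.ceil(conversions / (rate_pct / 100))


for source, rate in AI_RATES_PCT.items():
    print(f"{source:<16} {rate:>5.1f}%  {lift_vs_organic(rate):.1f}x organic")

# Illustrative target of 10 conversions (an assumption, not from the studies).
print(visits_needed(10, GOOGLE_ORGANIC_PCT), "organic visits vs",
      visits_needed(10, AI_RATES_PCT["AI search (avg)"]), "AI-referred visits")
```

At these averages, roughly 358 organic visits are needed to expect the same 10 conversions that about 71 AI-referred visits deliver.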

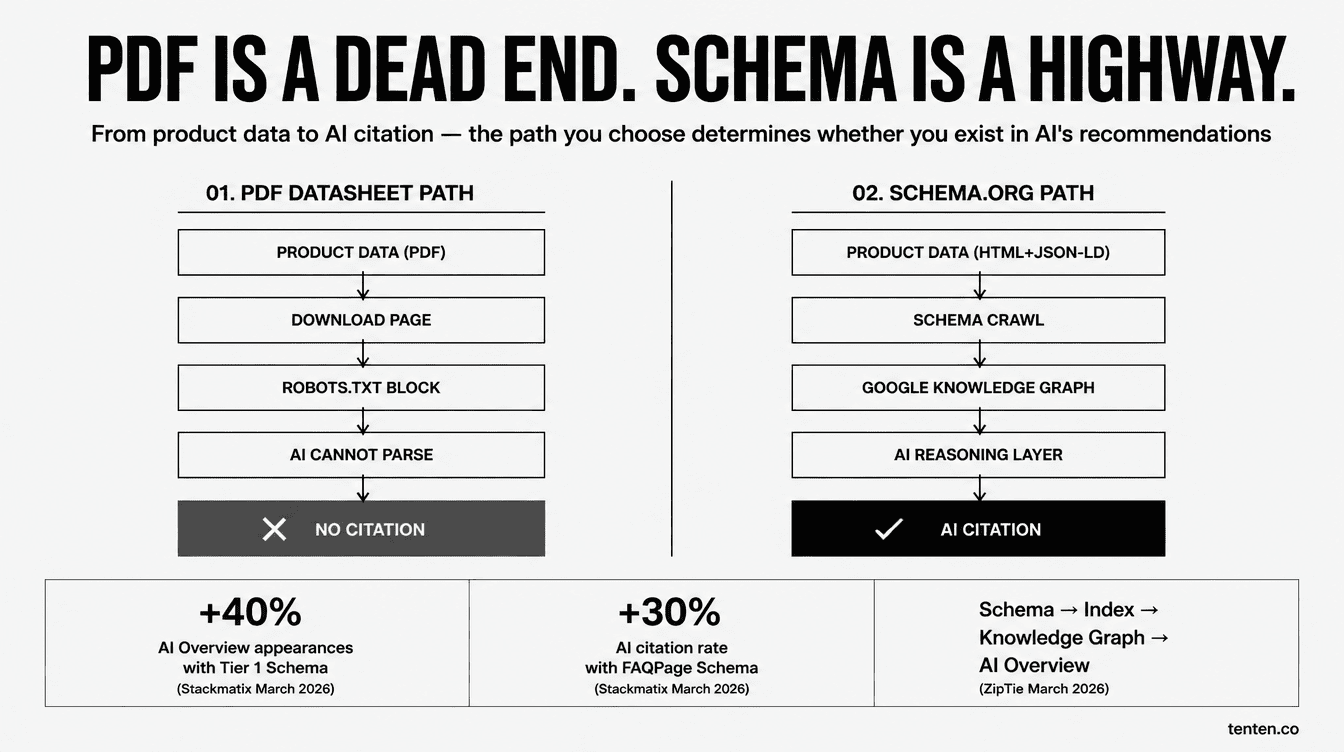

Why PDF datasheets break the pipeline

Industrial hardware companies, especially Taiwan's IPC vendors, have spent thirty years optimizing for the engineer's PDF download. Datasheets are dense, accurate, and beautiful in their own way. They contain the I/O specs, operating temperature ranges, MTBF numbers, certification badges, and ordering guides that engineering buyers actually need. The problem is that AI systems can't use them.

The reason is mechanical. AI search engines build their answers from search engine indexes: Google Knowledge Graph for AI Overviews, Bing's index for Copilot, web-search APIs for ChatGPT and Perplexity. ZipTie's analysis from March 2026 traces the path: Schema → Google Index → Knowledge Graph → AI Overview citation. Schema markup doesn't talk to the AI directly. It populates the database the AI consults. That is consistent with Microsoft's Fabrice Canel confirming in March 2025 that schema helps Bing Copilot understand content, and with Google's Search team confirming in April 2025 that structured data provides advantages in search results. The path runs through the index, not through real-time PDF parsing.

Most Taiwan IPC PDF datasheets fail at three points. First, many are exported as flat images or scans, so even the text isn't extractable. Second, most are linked from product pages with no schema-tagged HTML equivalent of the spec table, so the AI sees a download link but no machine-readable spec data. Third, the PDFs themselves carry no JSON-LD or other semantic markup, so a line like "Intel Atom x6425E, 4 GB DDR4, IP65" is just text without context: nothing labels which value is the processor, the memory, or the ingress rating. None of that helps an AI generate a comparison table when a procurement manager in Stuttgart asks "Find me fanless industrial PCs with -40°C operating temperature, IATF 16949 automotive certification, and dual GbE LAN."
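To see why labeled fields matter, here is a minimal Python sketch. Once each spec is a typed field rather than a run of PDF text, the Stuttgart query turns into a mechanical filter. The record fields, part numbers, and values are hypothetical placeholders, and no AI system is claimed to run this exact code. It only illustrates the kind of capability matching that structured data makes possible and flat text does not.

```python
from dataclasses import dataclass, field


@dataclass
class IpcSpec:
    """Structured spec record for one SKU (hypothetical fields and values)."""
    mpn: str
    fanless: bool
    min_operating_temp_c: int
    certifications: set[str] = field(default_factory=set)
    gbe_lan_ports: int = 0


# Hypothetical catalog; part numbers are placeholders, not real products.
CATALOG = [
    IpcSpec("DEMO-IPC-100", True, -40, {"IATF 16949", "CE"}, 2),
    IpcSpec("DEMO-IPC-200", True, -20, {"CE"}, 2),
    IpcSpec("DEMO-IPC-300", False, -40, {"IATF 16949"}, 4),
]


def match(catalog, *, min_temp_c, cert, min_gbe):
    """Return part numbers that satisfy every capability constraint."""
    return [
        s.mpn
        for s in catalog
        if s.fanless
        and s.min_operating_temp_c <= min_temp_c  # rated at least this cold
        and cert in s.certifications
        and s.gbe_lan_ports >= min_gbe
    ]


# The Stuttgart query: fanless, -40°C, IATF 16949, dual GbE LAN.
print(match(CATALOG, min_temp_c=-40, cert="IATF 16949", min_gbe=2))  # ['DEMO-IPC-100']
```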

Schema.org's ProductModel type was built for exactly this case. The official spec language is unambiguous: "ProductModel gives a datasheet or vendor specification of a product (in the sense of a prototypical description). Use this type when you are describing a product datasheet rather than an actual product, e.g. if you are the manufacturer of the product and want to mark up your product specification pages." The recommendation goes further. Provide gtin13, gtin14, gtin8, mpn identifiers, and link to the manufacturer property, so search engines can use your data to enrich offers found elsewhere on the web. For an IPC SKU like Advantech's ARK-2250L, the mpn field carrying that exact part number is what lets AI distinguish it from neighboring products in the catalog.
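A minimal ProductModel node keyed on the part number might be generated like this. The sketch assumes a Python build step that emits JSON-LD. The product name, part number, and company are hypothetical placeholders. GTINs are emitted only when the caller actually has one, because an invented identifier is worse than none.

```python
import json


def product_model_jsonld(name, mpn, manufacturer_name, gtin13=None):
    """Minimal schema.org ProductModel for a datasheet page, keyed on the part number."""
    node = {
        "@context": "https://schema.org",
        "@type": "ProductModel",
        "name": name,
        "mpn": mpn,  # exact manufacturer part number, as printed on the datasheet
        "manufacturer": {"@type": "Organization", "name": manufacturer_name},
    }
    if gtin13:  # emit identifiers only when you actually have them
        node["gtin13"] = gtin13
    return node


# Hypothetical SKU; swap in your real product name, part number, and company.
doc = product_model_jsonld("Example Fanless Box PC", "DEMO-IPC-100", "Example Industrial Co.")
print(json.dumps(doc, indent=2))
```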

The competitive structure for Taiwan vendors

This matters more for Taiwan than for most other regions because of how concentrated the industrial hardware supply chain is here. Advantech's own corporate page reports 41% global market share in industrial PCs and ranks the company #9 on the Top 100 Industrial IoT Companies list. PitchBook's March 2026 data shows Advantech with a $9.4B market cap and $2.27B in trailing-twelve-month revenue. Verified Market Reports' sizing of the embedded industrial PC market puts it at $7.5 billion globally in 2024, growing to $12.8 billion by 2033 at a 6.8% CAGR. Six of the top eight global IPC vendors are Taiwanese: Advantech, AAEON, ADLINK, IEI, Avalue, and NEXCOM. Add DFI and Portwell, and the Taiwanese share approaches 70% of the world's industrial computing supply.

That market position isn't safe by default. Semiconductor Insight's January 2026 SBC market report shows the top three Taiwan vendors hold a combined 28%, which means roughly 70% of the market is fragmented across 25+ smaller players competing on price. The sub-$200 SBC segment is brutal. The mid-tier Taiwan IPC vendors with NT$1B-to-NT$5B annual revenue (about $30M to $150M USD) sit in the most exposed position. They're not large enough to dominate global brand awareness like Advantech, but they can't compete on Shenzhen prices either. Now they're facing AI recommendation systems that are quietly consolidating the citation slots around whichever vendors built structured data infrastructure first.

The winner-take-most dynamic is what makes the timing urgent. BrightEdge and Amsive's 2025 joint research found that AI platforms cite an average of 3 to 4 brands per response, and the top 20 domains capture 66% of all AI citations in their tracked categories. Once an LLM has been trained to associate "ruggedized industrial PC for AI inference at the edge" with three specific vendors, breaking into that citation slot becomes harder than breaking into a Google top-three ranking. AI models reinforce what they already learned, and that learning is happening through the second half of 2026.

Five structured data investments that actually move the needle

Here's the work that matters, in priority order.

The first is Organization schema with a complete sameAs graph. This defines the brand entity that every AI system's knowledge layer resolves to. Stackmatix's March 2026 research showed sites with complete Tier 1 schema saw up to 40% more AI Overview appearances. Organization schema needs name, url, logo, address, contactPoint, and description, plus sameAs links to LinkedIn, Crunchbase, the Bloomberg profile, Wikipedia (if applicable), and the Wikidata entry (its Q-number URL). For Advantech-scale companies, the Wikipedia entry already exists but is rarely linked from the corporate site. For mid-market IPC vendors, the Wikidata entry usually doesn't exist, and that's the real problem: they have no anchored entity in the AI's knowledge graph.
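A sketch of that Organization node, built in Python so it can live in a site template, follows. Every value in the usage block is a hypothetical placeholder, including the Wikidata Q-number, which you would replace with your own entity's ID. The sameAs list is de-duplicated and sorted only so that regenerated output diffs cleanly.

```python
import json


def organization_jsonld(name, url, logo, description, address, same_as, sales_phone=None):
    """Build a schema.org Organization node; sameAs ties the brand to external profiles."""
    node = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "description": description,
        "address": {"@type": "PostalAddress", **address},
        "sameAs": sorted(set(same_as)),  # de-duplicated, stable order for diffs
    }
    if sales_phone:
        node["contactPoint"] = {
            "@type": "ContactPoint",
            "telephone": sales_phone,
            "contactType": "sales",
        }
    return node


# Every value below is a hypothetical placeholder.
org = organization_jsonld(
    name="Example Industrial Co.",
    url="https://www.example.com/",
    logo="https://www.example.com/logo.png",
    description="Taiwan-based maker of fanless industrial PCs.",
    address={"addressLocality": "New Taipei City", "addressCountry": "TW"},
    same_as=[
        "https://www.linkedin.com/company/example-industrial",
        "https://www.crunchbase.com/organization/example-industrial",
        "https://www.wikidata.org/wiki/Q123456",  # placeholder Q-number
    ],
    sales_phone="+886-2-0000-0000",
)
print(json.dumps(org, indent=2))
```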

The second is full ProductModel schema for every SKU. Each product page needs name, mpn (the part number), gtin if applicable, manufacturer, image, description, additionalProperty (for operating temperature, power consumption, I/O ports, certifications), and offers (even if B2B-only, mark as RFQ with PriceSpecification). Add isAccessoryOrSparePartFor to map accessories to host systems. The work scales: a vendor with 200 SKUs needs maybe 200 hours of one-time schema engineering and a CMS template that auto-generates the JSON-LD from the product database. The payoff compounds. Once Google indexes the schema, AI Overviews and ChatGPT searches start surfacing your specific SKUs in capability-matched comparisons.
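The CMS-template idea can be sketched as one function that maps a product-database row to JSON-LD and a second that wraps it in a script tag. The row layout, column names, and SKUs are hypothetical. The unitCode values assume UN/CEFACT common codes (CEL for degrees Celsius, WTT for watts). The offers block is omitted for brevity. The serializer escapes `</` so a stray string in the data cannot close the script tag early.

```python
import json

MANUFACTURER = {"@type": "Organization", "name": "Example Industrial Co."}  # hypothetical


def prop(name, value, unit_code=None):
    """One spec line as a schema.org PropertyValue (unitCode assumes UN/CEFACT codes)."""
    pv = {"@type": "PropertyValue", "name": name, "value": value}
    if unit_code:
        pv["unitCode"] = unit_code
    return pv


def row_to_jsonld(row):
    """Map one hypothetical product-database row to a ProductModel node."""
    node = {
        "@context": "https://schema.org",
        "@type": "ProductModel",
        "name": row["name"],
        "mpn": row["mpn"],
        "manufacturer": MANUFACTURER,
        "additionalProperty": [prop(*spec) for spec in row.get("specs", [])],
    }
    hosts = row.get("accessory_for", [])
    if hosts:  # accessories point back at the systems they fit
        node["isAccessoryOrSparePartFor"] = [{"@type": "ProductModel", "mpn": m} for m in hosts]
    return node


def to_script_tag(node):
    """Serialize for the page template; escape '</' so the JSON cannot end the tag early."""
    payload = json.dumps(node, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


ROWS = [  # hypothetical rows: specs are (name, value[, unit_code]) tuples
    {"mpn": "DEMO-IPC-100", "name": "Fanless Box PC",
     "specs": [("Operating temperature min", -40, "CEL"),
               ("Operating temperature max", 70, "CEL"),
               ("Power consumption", 15, "WTT"),
               ("Certification", "IATF 16949")]},
    {"mpn": "DEMO-DIN-KIT", "name": "DIN-rail mounting kit",
     "accessory_for": ["DEMO-IPC-100"]},
]

for row in ROWS:
    print(to_script_tag(row_to_jsonld(row)))
```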

The third is FAQPage schema on every product page. The same Stackmatix study found FAQPage schema raised AI citation rates by 30% on average. For IPC products, the natural FAQs cover operating environment limits, OS compatibility, certification details, PoE budgets, and integration questions. Your application engineers answer these on customer calls every day. Migrate the answers from internal Confluence pages to public product pages, wrap them in FAQPage JSON-LD inside proper `<script type="application/ld+json">` tags, and keep each answer to 40-60 words. Engineering pushback is usually low because the content already exists.
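A small generator can enforce the 40-60 word guideline at build time instead of relying on reviewers. The sketch below raises an error for any answer outside that range. The Q&A pair, the product, and its temperature limits are hypothetical placeholders. The word count is a plain whitespace split, which is an approximation.

```python
import json


def faq_page_jsonld(pairs, min_words=40, max_words=60):
    """Build FAQPage JSON-LD, rejecting answers outside the target word range."""
    questions = []
    for question, answer in pairs:
        words = len(answer.split())  # whitespace split: an approximate word count
        if not min_words <= words <= max_words:
            raise ValueError(
                f"answer to {question!r} has {words} words; target is {min_words}-{max_words}"
            )
        questions.append({
            "@type": "Question",
            "name": question,
            "acceptedAnswer": {"@type": "Answer", "text": answer},
        })
    return {"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": questions}


# Hypothetical Q&A pair; the product and its limits are placeholders.
faq = faq_page_jsonld([(
    "Can the DEMO-IPC-100 cold-start at -40°C?",
    "Yes. The DEMO-IPC-100 is specified for continuous operation from -40°C to 70°C "
    "with no fan, using a passive aluminum heatsink chassis. Cold starts at -40°C are "
    "supported without a pre-heater. For installations below that range, contact our "
    "application engineers to discuss an enclosure heater option.",
)])
print(json.dumps(faq, indent=2, ensure_ascii=False))
```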

The fourth is the hard one: convert PDF datasheets to HTML-plus-schema while keeping the PDF for engineer downloads. The current architecture is "product page → download datasheet PDF." The new architecture is "product page contains the full spec table in HTML with PropertyValue schema for every spec, application diagrams in HTML with HowTo schema, certifications as schema entities, and a PDF download for offline use." This is genuinely 2-4 hours per SKU when you account for proofreading the HTML version against the PDF. Most IPC vendors have CMS systems that can template this, but the data migration is real engineering work, usually 8-12 weeks for a 200-SKU catalog.
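The dual-track idea comes down to one source of truth per spec table that renders twice: once as an HTML table for people and once as PropertyValue objects for machines. Here is a minimal Python sketch, assuming spec rows are stored as (label, value, unit) tuples. The SKU values are hypothetical, and the PDF export step that would consume the same rows is not shown.

```python
import html

# One source of truth for the spec table (hypothetical SKU and values).
SPECS = [
    ("Processor", "Intel Atom x6425E", None),
    ("Memory", "4 GB DDR4", None),
    ("Ingress protection", "IP65", None),
    ("Operating temperature (min)", -40, "°C"),
]


def spec_table_html(specs):
    """Render the spec rows as a plain HTML table for human readers."""
    rows = []
    for label, value, unit in specs:
        shown = f"{value} {unit}" if unit else str(value)
        rows.append(f"<tr><th>{html.escape(label)}</th><td>{html.escape(shown)}</td></tr>")
    return "<table>\n" + "\n".join(rows) + "\n</table>"


def spec_properties(specs):
    """Emit the same rows as schema.org PropertyValue objects for machines."""
    props = []
    for label, value, unit in specs:
        pv = {"@type": "PropertyValue", "name": label, "value": value}
        if unit:
            pv["unitText"] = unit  # free-text unit; unitCode is the coded alternative
        props.append(pv)
    return props


print(spec_table_html(SPECS))
print(spec_properties(SPECS))
```

Because both outputs come from the same rows, the proofreading step shrinks to checking one list against the PDF instead of comparing two documents with each other.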

The fifth is Article schema with full author E-E-A-T signals. AI recommendation engines weight source authorship heavily. Every white paper, application note, and technical blog post needs Article schema linking to a Person schema with jobTitle, affiliation, a sameAs link to the author's LinkedIn URL, and knowsAbout properties listing the engineer's domain expertise. This runs against Asian B2B publishing conventions, where technical content is often anonymous or attributed to "Engineering Team," but AI models prefer verifiable individual authors. Establishing a real, named Chief Technologist as the public author, building out their LinkedIn presence, and attaching their schema-tagged identity to all technical publications takes 6 to 12 months to show measurable lift in AI citation rates. Once built, it's a defensible asset.
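As a sketch, an Article node with a verifiable Person author could be generated like this. The author profile, LinkedIn URL, and headline are hypothetical placeholders. The Person properties (jobTitle, affiliation, sameAs, knowsAbout) are the ones the paragraph above lists.

```python
import json


def article_jsonld(headline, date_published, author):
    """Article node whose author is a verifiable Person, not an anonymous team."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date
        "author": {
            "@type": "Person",
            "name": author["name"],
            "jobTitle": author["job_title"],
            "affiliation": {"@type": "Organization", "name": author["affiliation"]},
            "sameAs": [author["linkedin"]],
            "knowsAbout": author["knows_about"],
        },
    }


author = {  # hypothetical engineer profile
    "name": "Jane Example",
    "job_title": "Chief Technologist",
    "affiliation": "Example Industrial Co.",
    "linkedin": "https://www.linkedin.com/in/jane-example",
    "knows_about": ["edge AI inference", "fanless thermal design", "IATF 16949"],
}
doc = article_jsonld("Thermal design for -40°C fanless PCs", "2026-05-01", author)
print(json.dumps(doc, indent=2, ensure_ascii=False))
```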

Tools for each layer

The toolchain matters because maintenance cost determines whether the investment compounds or rots:

| Layer | Recommended tools | Best for | Output |
|---|---|---|---|
| Organization + sameAs | Schema App, Yoast SEO Premium, hand-written JSON-LD | Whole company, one-time | Knowledge Graph entity established |
| ProductModel at scale | InLinks, WordLift, custom schema generator with API | Vendors with 200+ SKUs | Per-SKU structured product page |
| FAQPage automation | Rank Math FAQ block, Schema App FAQ generator | Tech support + marketing collaboration | 5-10 FAQs per product |
| HTML datasheet conversion | CMS templates + DAM integration | Engineering docs + web dev | PDF→HTML+schema dual track |
| AI citation monitoring | Profound, Otterly, Ahrefs Brand Radar, Evertune | Marketing analytics | Weekly citation reports |

Ahrefs Brand Radar is the lowest-cost entry point for measurement. Profound has the deepest B2B coverage across ChatGPT, Claude, and Perplexity if budget allows. Evertune's January 2026 research framed the use case clearly: B2B companies use schema to define expertise areas and ensure AI systems understand what problems the brand solves, while e-commerce companies use product schema so AI can display price, availability, and ratings. Industrial hardware needs both modes: service and capability framing for the OEM/ODM side, product framing for the catalog SKUs.
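Before paying for a monitoring tool, a baseline can be computed by hand. Run a fixed prompt set, for example 30 industry-relevant prompts, save each AI answer, and count how many mention the brand. The sketch below covers only the counting step. It assumes the answers were already collected and saved as text (no vendor API is used or implied). The brand aliases and sample answers are hypothetical, and the word-boundary match assumes aliases begin and end with letters or digits.

```python
import re


def citation_rate(responses, brand_aliases):
    """Count how many stored AI answers mention the brand under any alias."""
    # \b boundaries assume aliases begin and end with a letter or digit.
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(a) for a in brand_aliases) + r")\b",
        re.IGNORECASE,
    )
    cited = sum(1 for text in responses if pattern.search(text))
    total = len(responses)
    return cited, total, (cited / total if total else 0.0)


# Hypothetical answers saved from one weekly run of a fixed prompt set.
answers = [
    "For -40°C fanless units, consider Example Industrial's DEMO-IPC-100.",
    "Common picks are Vendor A and Vendor B.",
    "example industrial and Vendor C both offer IATF 16949 options.",
]
cited, total, rate = citation_rate(answers, ["Example Industrial", "DEMO-IPC"])
print(f"{cited}/{total} prompts cite the brand ({rate:.0%})")  # 2/3 prompts cite the brand (67%)
```

Tracking the same prompt set week over week matters more than the absolute number, because the rate is only comparable against itself.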

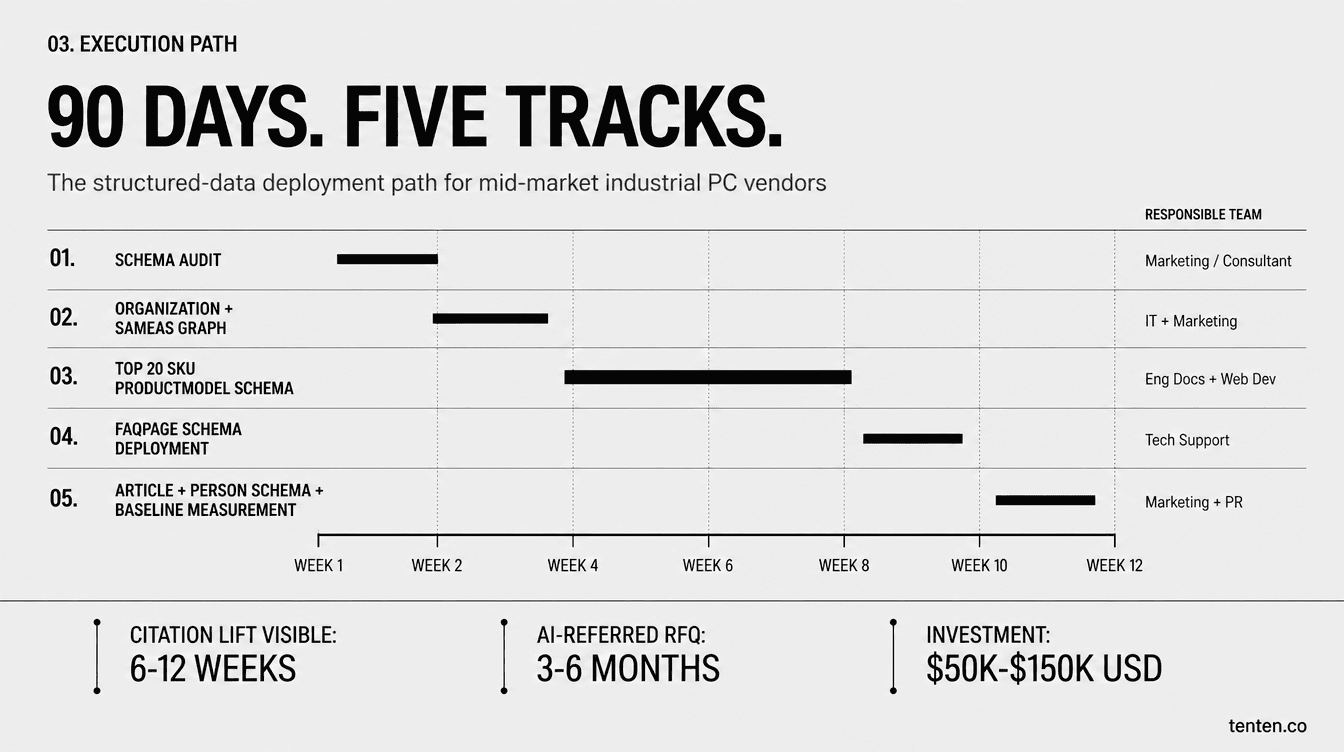

A realistic 90-day rollout

For a Taiwan IPC vendor in the $30M-to-$300M revenue range with 50 to 500 SKUs, here's what 90 days of focused work actually looks like:

| Weeks | Work | Owner |
|---|---|---|
| 1-2 | Schema audit on existing site (Rich Results Test + Schema Markup Validator) | Digital marketing or external consultant |
| 3-4 | Organization schema and sameAs graph; submit llms.txt to GSC | IT + marketing |
| 5-8 | Top 20 SKUs get full ProductModel schema; HTML datasheet conversion starts | Engineering docs + web dev |
| 9-10 | FAQPage schema added to each top SKU (in proper `<script type="application/ld+json">` tags) | Technical support compiles Q&A |
| 11-12 | Article schema + Person schema for technical leads; AI citation baseline measurement | Marketing + PR |
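For the weeks 1-2 audit, Google's Rich Results Test and the Schema Markup Validator remain the authoritative checks, but they handle one URL at a time. A standard-library Python pre-check like the sketch below can sweep a whole catalog first and list which schema types each page declares. It does not validate anything against Google's requirements, and the set of target types per page is an assumption you would adjust.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect every <script type="application/ld+json"> block on a page."""

    def __init__(self):
        super().__init__()
        self._capturing = False
        self._chunks = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._capturing, self._chunks = True, []

    def handle_data(self, data):
        if self._capturing:
            self._chunks.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._capturing:
            self._capturing = False
            try:
                self.blocks.append(json.loads("".join(self._chunks)))
            except json.JSONDecodeError:
                self.blocks.append({"@type": "INVALID_JSON"})  # flag for manual review


def schema_types(html_text):
    """Return the set of @type values declared in a page's JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html_text)
    parser.close()
    found = set()
    for block in parser.blocks:
        nodes = block if isinstance(block, list) else block.get("@graph", [block])
        for node in nodes:
            declared = node.get("@type", [])
            found.update([declared] if isinstance(declared, str) else declared)
    return found


TARGET = {"Organization", "ProductModel", "FAQPage"}  # assumed per-page targets
page = ('<html><head><script type="application/ld+json">'
        '{"@context": "https://schema.org", "@type": "Organization", "name": "Example"}'
        '</script></head></html>')
print(sorted(TARGET - schema_types(page)))  # ['FAQPage', 'ProductModel']
```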

External consultant fees plus internal labor typically come in at $50K-$150K USD for this scope. That's less than a single major trade show booth with international travel. The time window is tight, though. Competitors who started in early 2026 will have a 6-month head start by the time most companies finish reading reports about why this matters.

Where I disagree with the conventional GEO advice

Some honest disagreement with the consultant industry. The llms.txt file has been heavily promoted as a GEO must-have. The actual data is mixed. OtterlyAI ran a 90-day test on a properly implemented llms.txt: out of 62,100 AI bot requests, exactly 84 went to the file (about 0.1%). Search Engine Land tested llms.txt on its own site from August to October 2025 and recorded zero visits from Google-Extended, GPTBot, PerplexityBot, or ClaudeBot. Google's John Mueller has publicly compared llms.txt to the keywords meta tag, a self-declared signal that search engines learned to ignore long ago.

But there's a counterexample. Dev5310 submitted their llms.txt directly through Google Search Console's URL inspection tool on February 2, 2026. Google crawled it the same day. The next day, Google's AI Mode started citing it. OAI-SearchBot (OpenAI's background indexer) found it through internal links. Within 18 days, the file was being cited as an authoritative identity layer across multiple AI platforms. The takeaway: llms.txt isn't magic, but a well-structured, token-efficient brand summary at a predictable path, submitted directly to GSC, can serve as a useful identity layer. It's a low-cost addition, not the centerpiece.
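If you do add the file, the layout below follows the llmstxt.org proposal: an H1 with the site name, a blockquote summary, then H2 sections of Markdown link lists. This sketch only renders the text. Serving it at `/llms.txt` on your site root and submitting that URL through Search Console's URL Inspection tool happen outside the code. The company, URLs, and descriptions are hypothetical placeholders.

```python
def build_llms_txt(name, summary, sections):
    """Render a Markdown llms.txt in the llmstxt.org proposal layout."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        lines += [f"- [{title}]({url}): {note}" for title, url, note in links]
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"  # exactly one trailing newline


# Hypothetical company and URLs.
SECTIONS = {
    "Products": [
        ("Fanless box PCs", "https://www.example.com/products/fanless",
         "Wide-temperature systems with per-SKU HTML spec tables"),
    ],
    "Company": [
        ("About", "https://www.example.com/about", "Certifications, factories, contact"),
    ],
}
text = build_llms_txt("Example Industrial Co.",
                      "Taiwan-based maker of fanless industrial PCs.", SECTIONS)
print(text)
```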

The bigger disagreement is on schema's direct LLM impact. A widely cited Search/Atlas study from December 2024 found no correlation between schema coverage and AI citation rates. BrightEdge's research found the opposite. Both are right, because they're measuring different things. Schema doesn't talk to LLMs directly. LLMs tokenize JSON-LD and lose its semantic structure. Schema talks to Google and Bing's indexes, which become the data layer that ChatGPT search, Google AI Overviews, and Perplexity draw on. The mental model isn't "schema → AI cites me." It's "schema → my entity is well-defined in Knowledge Graph → AI is more likely to use my data when generating answers → citation rate rises." The path is indirect but durable. Knowledge Graph entity authority is a long-term asset that survives platform turnover.

FAQ

We're an OEM/ODM manufacturer — products ship under customer brands. Does GEO still matter?

Yes, but the structure shifts. You can't lean on ProductModel schema for products you don't sell under your own brand. Pivot instead to Service schema and to Organization schema with hasCredential and makesOffer, marking up process capabilities (surface-mount assembly, wave soldering, specific test capabilities), certifications (IATF 16949, ISO 13485, UL), and industry experience (automotive, medical, aerospace). When a US sourcing manager asks ChatGPT "find me a Taiwan EMS partner with IATF 16949 and 10+ years automotive experience," those schema fields are what AI uses.
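A sketch of that capability-first Organization node follows. It assumes the current schema.org vocabulary, which lists hasCredential on Organization (ranging over EducationalOccupationalCredential) and makesOffer pointing at an Offer whose itemOffered is a Service. Verify both against schema.org before shipping. Using knowsAbout for industry experience is a modeling choice, not a requirement. The company and the capability names are hypothetical placeholders.

```python
import json


def ems_partner_jsonld(name, url, certifications, capabilities, industries):
    """Organization node for an OEM/ODM/EMS maker: credentials, services, sector expertise."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "hasCredential": [
            {
                "@type": "EducationalOccupationalCredential",
                "credentialCategory": "certification",
                "name": cert,
            }
            for cert in certifications
        ],
        "makesOffer": [
            {"@type": "Offer", "itemOffered": {"@type": "Service", "name": cap}}
            for cap in capabilities
        ],
        "knowsAbout": industries,  # sector experience, e.g. automotive, medical
    }


node = ems_partner_jsonld(
    "Example EMS Co.",  # hypothetical company
    "https://www.example.com/",
    ["IATF 16949", "ISO 13485", "UL"],
    ["Surface-mount assembly", "Wave soldering", "In-circuit testing"],
    ["Automotive", "Medical", "Aerospace"],
)
print(json.dumps(node, indent=2))
```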

Most of our R&D content is confidential. Won't structured data leak proprietary information?

Layer your exposure. Functional specs, certifications, and operating environment data are already public in your downloadable datasheets, so schema-tagging them adds zero new exposure. R&D architecture, process parameters, yield data, and customer lists stay behind NDAs and gated forms. GEO surfaces "what problem you solve," not "how you solve it." The competitive advantage is in capability matching at the discovery stage, not in giving away your IP.

Our website is built on legacy ASP from 2014. How do we shortcut the schema work?

In priority order: (1) Add Organization schema to home, about, and contact pages, about one week of work; (2) Hand-add ProductModel schema to your top 20 highest-revenue SKUs, about 2 hours per SKU; (3) Add 5-question FAQPage schema to each major product page, half a day per product; (4) PDF-to-HTML datasheet conversion is a longer project, plan it as a quarterly initiative. After the first three steps, AI citation rate typically lifts in 6 to 12 weeks.

Should we prioritize the English site or the Chinese site?

English first. International B2B inquiries from outside Greater China come overwhelmingly in English, and ChatGPT and Claude handle English-language schema content more reliably than Traditional Chinese. Build out the English site with full schema and full content depth, then mirror the structure on the Chinese site for the domestic Taiwan market with proper hreflang markup. Don't just translate the English content line for line. Write each version for its actual audience.
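The hreflang piece is small but easy to get wrong. Each language version should carry the full, reciprocal set of alternate links, including a link to itself, plus an x-default. The Python sketch below renders that set once so every template emits identical tags. The URLs are hypothetical, and using `zh-TW` for the Traditional Chinese Taiwan site is an assumed language-region choice.

```python
from html import escape


def hreflang_links(alternates, x_default):
    """Emit the full set of <link rel="alternate"> tags; every language version carries all of them."""
    tags = [
        f'<link rel="alternate" hreflang="{escape(lang)}" href="{escape(url)}">'
        for lang, url in sorted(alternates.items())
    ]
    tags.append(f'<link rel="alternate" hreflang="x-default" href="{escape(x_default)}">')
    return "\n".join(tags)


ALTERNATES = {  # hypothetical URLs for one product page
    "en": "https://www.example.com/en/products/demo-ipc-100",
    "zh-TW": "https://www.example.com/zh-tw/products/demo-ipc-100",
}
print(hreflang_links(ALTERNATES, ALTERNATES["en"]))
```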

How long until GEO investment converts to actual orders?

The leading indicator (AI citation frequency) moves in 6 to 12 weeks after first schema deployment. The lagging indicator (AI-referred traffic converting into RFQs) typically takes 3 to 6 months. The conversion rate is roughly 5.1x higher than Google organic per Exposure Ninja's March 2026 analysis, so the ROI math is about per-inquiry value rather than total traffic volume. A single AI-referred RFQ for a $50K industrial system has the same revenue impact as 30+ standard organic inquiries.

Further reading

For schema fundamentals, start with SEO Schema Markup: 6 Types for Small-to-Mid Businesses and Featured Snippets and Structured Data. The full GEO/AEO framework is covered in AI Search Optimization: GEO/AEO as the Next Evolution of SEO and Ultimate AI SEO & GEO Checklist for 2026. For citation tracking, 7 AI Overview Citation Monitoring Tools and GA4 AI Traffic Tracking Setup Guide cover the analytics infrastructure.

For Taiwan industrial hardware-specific context, The AI Transformation Guide for Industrial Computer B2B Companies and How Taiwan IPC Brands Use Shopify Plus to Build International B2B Platforms offer complementary angles. The semiconductor side connects through AI Semiconductors: Core of the AI Era and TSMC's Process Roadmap from N3 to 1.4nm. Practical case studies on GEO ROI are in How GEO Drove 1,500 Orders in 3 Days for E-commerce and Turning Shopify into an AI-Recommended Store, different vertical, identical engineering logic.

Authoritative sources

- G2 — The Answer Economy: How AI Search Is Rewiring B2B Software Buying (April 2026)

- PRNewswire — New G2 Research: Half of B2B Software Buyers Now Start Their Research With AI Chatbots

- Loganix 2026 B2B AI Buying Behavior Analysis (via Yahoo Finance)

- Schema.org — ProductModel official specification

Author Insight

I've spent the last six months helping a mid-market Taiwan IPC vendor (about $100M annual revenue) rebuild their structured data infrastructure. The baseline was rough. ChatGPT cited the company in exactly 1 out of 30 industry-relevant prompts. After six weeks of Organization schema and ProductModel schema work covering the top 20 SKUs, ChatGPT citations climbed to 7 out of 30 by week 8. Perplexity went from zero to 4 out of 30 over the same period. The most interesting outcome wasn't the citation numbers, though. The sales team reported that the English RFQs coming in via AI referrals were qualitatively different. Buyers cited specific part numbers, quoted operating temperature ranges, and asked I/O-level technical questions in the first message. AI had pre-qualified the inquiry by feeding the buyer structured information first. Schema isn't just an SEO mechanic. It's reshaping the information density at which buyers enter your sales funnel.

The PDF datasheet itself isn't the enemy. PDFs are the right format for engineers who want to print, archive, or reference the spec sheet on a shop floor. The mistake is treating PDF as the terminal form of product data instead of the export form. The same content needs to live in HTML with structured data first, with the PDF generated as an artifact for human downloads. Mobile-first indexing forced a similar mental shift on Taiwan B2B vendors a decade ago, when desktop sites became responsive sites. AI-first indexing is a bigger paradigm shift on a shorter timeline. The vendors that adapt will own the next decade of category answers.

Schedule a Tenten consultation

Our team has been running schema deployment and GEO monitoring engagements for B2B clients across three Taiwan industrial verticals: semiconductor equipment, industrial PCs, and factory automation systems. From baseline measurement through top-20 SKU schema engineering to AI citation monitoring dashboards, our typical engagement shows first AI citation rate lift around week 12. If you want to talk through what GEO looks like for your specific product lines, book a consultation with the Tenten team. We'll start with a free schema audit and a baseline citation measurement across ChatGPT, Claude, and Perplexity, so you know exactly where you stand before deciding what to fix.